Subvocal speech recognition presents itself as an alternative communication method to overcome the limitations of augmentative and alternative communication.

EMG technology has the potential to analyze the complex muscular architecture of the face and neck for subvocal speech recognition research by extracting the signals and utilizing them for automated speech recognition.

A novel communication system that translates biosignals from articulatory muscles into speech using a personalized, digital voice has been shown in a proof-of-study concept.

Your voice is hugely personal to you. As humans, we connect and interact by communicating with one another. This involves exchanging ideas and emotions through methods such as speech, writing, and gestures. Communication is a fundamental human right. As humans, we build and maintain both professional and social relationships through communication. However, there are still key challenges toward ensuring that ALL individuals, including those with complex communication needs, have access to this fundamental right.

In the US alone, it is estimated that 1.3% of the population (≈ 4 million people) are unable to communicate reliably with their natural speech. This reduced ability results in the natural voice having to be supplemented or replaced by augmentative and alternative communication (AAC) strategies. However, AAC systems are not without their limitations. Restrictive usability and the lack of natural speech characteristics have led current AAC users to feeling a loss of identity, a lack of confidence, social isolation, and reduced intimacy.

To overcome limitations of AAC, subvocal speech recognition (SSR) has been focused upon as an alternative communication method. Surface electromyography (EMG) presents a particularly exciting prospect within SSR based research. Through using the small detection heads of EMG technology, electrical signals can be analyzed from the complex muscular architecture of the face and neck. By optimizing machine learning techniques, specific features of the sEMG signal can be extracted by utilizing advancements in automated speech recognition (ASR).

Research led by Dr. Jennifer Vojtech (Delsys Inc./Altec), and colleagues from industry and academia, has looked to create a novel communication system designed to translate biosignals from articulatory muscles into speech using a personalized, digital voice. Their proof-of-concept study demonstrated the feasibility of using sEMG-based alternative communication for not only word recognition, but also for the recognition of the personal features of speech which define our daily interactions.

How are patients currently supported through AAC techniques?

The field of AAC is broad. Jennifer noted “AAC is an umbrella term. It encompasses simple forms of communication such as manual gestures and facial expressions to high-tech forms like speech-generating devices. However, it is commonly accepted that the number of AAC users is growing.” Increases could be due to the availability of technology, AAC awareness and increases in the number of individuals with complex communication needs.

For those who choose to augment or replace their voice through technology; there are several currently available devices.

Tracheoesophageal prosthesis (TEP)

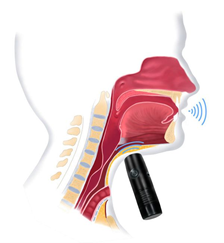

Electrolarynx (EL)

Esophageal speech (ES)

As is common within human nature, different users prefer different solutions. Current users of the available devices described the solutions as “difficult to care for, unintelligible, tedious and robotic sounding”. Jennifer added “even though select individuals were able to gain some prosodic control through EL devices, patients were reluctant to use the technology as it is too physically and mentally demanding”.

In the end, users will choose the method that will help them to communicate, whether it is perfect for them or not. But why should we be satisfied by allowing technologies to be used that present an imperfect solution to an already adverse situation.

What advantages does sEMG present for SSR?

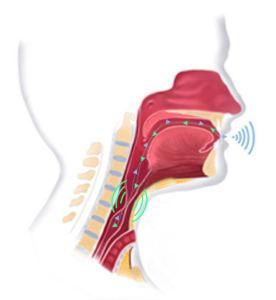

Unlike many of the current AAC devices, sEMG-based SSR presents a hands-free tool to allow users to communicate without the added physical reliance on their hands. The wireless nature of sEMG technology means sensors can be fitted to the specific articulatory muscles of interest, as shown in figure 1.

Figure 1 – Depiction of sensor configurations targeting (1) anterior belly of the digastric, mylohyoid, and geniohyoid; (2) platysma, mylohyoid, and stylohoid; (3, 4) platysma, thyrohyoid, omohyoid, and sternohyoid; (5) zygomaticus major and/or minor, levator labii superioris, and levator anguli oris; (6, 7) orbicularis oris; and (8) mentalis.

The sEMG sensors produce high-fidelity data. When speaking about the signal output from the sensors, Jennifer made clear “it is important to note that the sEMG amplitude may vary substantially across speaker, sensor location, and phonemic content but also due to the individual way in which patients stress phrases or words.” As shown in figure 2, when the patient stresses particular words in the phrase, there is a notable increase in the signal amplitude, particularly from the ventral neck muscles (sensors 1-4 as shown in figure 1).

Figure 2 – Example of raw surface electromyographic signals obtained from one speaker with laryngectomy from the token “Mom strongly dislikes appetizers.”

By tuning the custom algorithm to look for not only lexical content (via similarities in sEMG features in the presence of prosodic modulations), but also for categorized phrasal stress (via differences in sEMG features in repetition of the same phrase), the team were able to generate a personalized, digital voice!

How is the current research looking to advance SSR?

Speech, being the most natural form of human communication, has always presented itself as an exciting modality for human-machine interactions.

Whilst prior work has shown the ability to recognize words and phrases at an average accuracy rate of 90% using sEMG based techniques, the prosodic features of speech were still lacking. When speaking of the direction for future developments within the field, Jennifer stated “the real focus of the next generation research into sEMG based AAC, and of the current work, is making the recorded signals sound like how the user wants it to sound. Within user feedback, one word was more prevalent than any other about what they want: EXPRESSION!”

Since each user is unique in how they use prosody to convey a specific meaning, the team used a set of sEMG features to create an individualized model for discerning message content. The sEMG features include:

By tuning in on the specific feature extractions from the sEMG signal, the algorithm can be adaptable to the person rather than the person having to adapt to the algorithm. As with all human-machine interactions, the HUMAN must be the focal point of the application.

What does the future hold for AAC users?

Whilst the current research is a major step forward in the ability of sEMG based alternative communication to recognize the personalized aspects of speech, there is still a lot of work to be done.

Jennifer reflected on how the team are still looking to progress the research and their knowledge. “Whilst the present work demonstrated the ability to create a more natural sounding voice, the current sEMG-based ASR technique does not provide the same real-time functionality for speech production that an EL device does. Coupling the two components of word/prosody recognition and the text-to-speech processing into a near real-time solution is the next big direction for the upcoming research.

“A supplementary goal of future research is to focus on extracting more specific attributes of prosody. Elements such as increased intonation, perception of loudness and temporal variation are crucial to offering the users a more personalized experience through next generation AAC devices.”

The team at Delsys and Altec are driven by their passion to create tangible differences in the lives of users who currently rely upon AAC technologies. The importance of the work cannot be underestimated in the pursuit of giving a voice back to those without one.